Introduction

Today, the container landscape is rather crowded and Docker is not the predominant player anymore.

The goal of this post is to present the products, projects, and vendors that make up the container landscape and to classify them using the existing standards.

For this classification I will use the standards from the Open Container Initiative (OCI) and the Cloud Native Computing Foundation (CNCF).

Open Container Initiative

The goal of the Open Container Initiative (OCI) is to promote a set of common, minimal, open standards and specifications around container technology, more precisely container formats and runtimes. At the time of writing it offers the following standards: the Image Specification, the Runtime Specification, and the Distribution Specification.

Cloud Native Computing Foundation

The goal of the Cloud Native Computing Foundation (CNCF) is to drive adoption of cloud native technologies (containers, service meshes, microservices, immutable infrastructure) by fostering and sustaining an ecosystem of open source, vendor-neutral projects. In the specific case of containers we will focus on the Container Runtime Interface (CRI).

If you wonder whether there is any link between OCI and CNCF, the answer is that both initiatives operate under the Linux Foundation umbrella, with the OCI focusing only on container formats and runtimes.

OCI Image Specification

The image specification defines the structure of an OCI Image which should contain a manifest, an image index (optional), a set of filesystem layers, and a configuration. The goal of this specification is to enable the creation of interoperable tools for building, transporting, and preparing a container image to run.
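To make the relationship between these parts more concrete, here is a minimal Python sketch of how a manifest references a configuration and a set of layers through content descriptors (media type, digest, size). The media type strings come from the OCI image specification; the helper names and the placeholder blobs are assumptions made for illustration, and the placeholder config is far smaller than a real one.

```python
import hashlib
import json


def descriptor(media_type: str, blob: bytes) -> dict:
    """Build a content descriptor (mediaType, digest, size) for a blob."""
    return {
        "mediaType": media_type,
        "digest": "sha256:" + hashlib.sha256(blob).hexdigest(),
        "size": len(blob),
    }


def build_manifest(config_blob: bytes, layer_blobs: list[bytes]) -> dict:
    """Assemble a minimal image manifest that references a config and its layers."""
    return {
        "schemaVersion": 2,
        "mediaType": "application/vnd.oci.image.manifest.v1+json",
        "config": descriptor("application/vnd.oci.image.config.v1+json", config_blob),
        "layers": [
            descriptor("application/vnd.oci.image.layer.v1.tar+gzip", blob)
            for blob in layer_blobs
        ],
    }


# Placeholder bytes standing in for a real config and a real gzipped layer tarball.
config_blob = json.dumps({"architecture": "amd64", "os": "linux"}).encode()
layer_blob = b"placeholder layer content"

manifest = build_manifest(config_blob, [layer_blob])
print(json.dumps(manifest, indent=2))
```

Because every blob is addressed by its digest, two images that share a layer can reference the same stored content.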

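To see the content of an image, the following commands can be used (for an nginx image in this example). Note that docker save writes a Docker-format archive, which is closely related to the OCI image format but not identical to it:

docker save nginx > nginx.tar

tar -xvf nginx.tar

The content of the tar file will look like: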

71388...b14.json

c827b...b2014e92/

c827b...b2014e92/VERSION

c827b...b2014e92/json

c827b...b2014e92/layer.tar

manifest.json

repositories
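The manifest.json file in the listing above ties the other entries together. As a rough sketch, it can be read directly from the archive with the Python standard library. The function name is hypothetical, and the demo archive is a tiny in-memory stand-in whose file names are invented, not the real nginx content.

```python
import io
import json
import tarfile


def read_saved_manifest(archive) -> list:
    """Return the parsed manifest.json from a `docker save` archive.

    `archive` is a binary file object opened on the tar file.
    """
    with tarfile.open(fileobj=archive, mode="r") as tar:
        member = tar.extractfile("manifest.json")
        if member is None:
            raise ValueError("manifest.json is not a regular file")
        return json.load(member)


def make_demo_archive() -> io.BytesIO:
    """Build a tiny in-memory stand-in for nginx.tar (hypothetical content)."""
    manifest = [{
        "Config": "config.json",
        "RepoTags": ["nginx:latest"],
        "Layers": ["layer-1/layer.tar"],
    }]
    payload = json.dumps(manifest).encode()
    buffer = io.BytesIO()
    with tarfile.open(fileobj=buffer, mode="w") as tar:
        info = tarfile.TarInfo(name="manifest.json")
        info.size = len(payload)
        tar.addfile(info, io.BytesIO(payload))
    buffer.seek(0)
    return buffer


for entry in read_saved_manifest(make_demo_archive()):
    print(entry["RepoTags"], "->", entry["Layers"])
```

With the real file, the same function can be called on open("nginx.tar", "rb").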

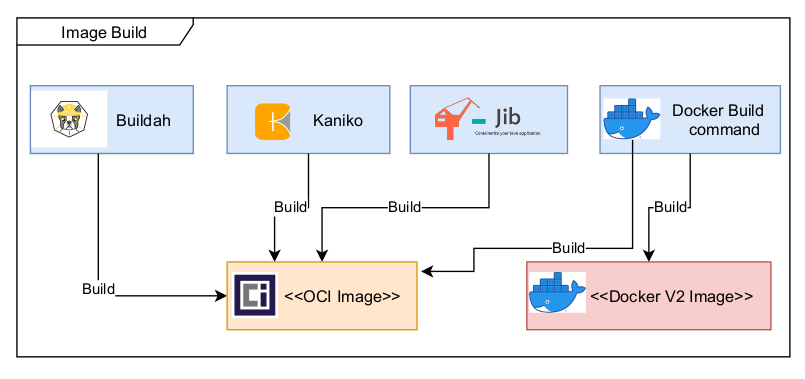

On the tooling side, here are a few tools that can generate OCI-compliant images (the list is far from exhaustive):

- Kaniko – tool to build container images from a Dockerfile, inside a container or Kubernetes cluster. Kaniko doesn’t depend on a Docker daemon and executes each command within a Dockerfile completely in userspace. This enables building container images in environments that can’t easily or securely run a Docker daemon, such as a standard Kubernetes cluster. The tool was created by Google.

- Jib – tool to build Docker and OCI images for your Java applications without a Docker daemon. It is available as plugins for Maven and Gradle and as a Java library. The tool was created by Google.

- Buildah – tool to build OCI images from a Dockerfile that is daemonless and rootless. Its companion tool Podman can also generate a pod file from one or more containers and mimic the execution of a pod.

OCI Runtime Specification

The goal of the runtime specification is to specify the configuration, execution environment, and lifecycle of a container. A container’s configuration is specified in a config.json file for the supported platforms, which details the fields that enable the creation of a container.
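As an illustration, a heavily trimmed config.json could be generated as follows. The field names follow the runtime specification, but the values (command, hostname, namespace list) are assumptions chosen for the example, and the check at the end looks only at a handful of fields rather than performing real schema validation.

```python
import json

# A trimmed-down container configuration; real files contain many more fields.
config = {
    "ociVersion": "1.0.2",
    "process": {
        "terminal": False,
        "user": {"uid": 0, "gid": 0},
        "args": ["sh", "-c", "echo hello"],
        "env": ["PATH=/usr/sbin:/usr/bin:/sbin:/bin"],
        "cwd": "/",
    },
    "root": {"path": "rootfs", "readonly": True},
    "hostname": "demo",
    "linux": {
        "namespaces": [{"type": "pid"}, {"type": "mount"}, {"type": "network"}],
    },
}


def missing_fields(cfg: dict) -> list[str]:
    """Report which of a few illustrative top-level fields are absent."""
    expected = ["ociVersion", "process", "root"]
    return [name for name in expected if name not in cfg]


print(json.dumps(config, indent=2))
print("missing:", missing_fields(config))
```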

The execution environment is specified to ensure that applications running inside a container have a consistent environment between runtimes along with common actions defined for the container’s lifecycle.
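The lifecycle can be pictured as a small state machine. The sketch below uses the four status values named by the runtime specification (creating, created, running, stopped); the event names, the extra "deleted" marker, and the class itself are simplifications introduced for this example, not part of any runtime.

```python
class LifecycleError(Exception):
    """Raised when an event is not valid in the current state."""


# (current status, event) -> next status
TRANSITIONS = {
    ("creating", "created"): "created",  # runtime finished preparing the environment
    ("created", "start"): "running",     # the user-specified process is executed
    ("running", "exit"): "stopped",      # the process exited, on its own or after a kill
    ("stopped", "delete"): "deleted",    # resources released ("deleted" is not a spec status)
}


class Container:
    def __init__(self, container_id: str) -> None:
        self.id = container_id
        self.status = "creating"

    def apply(self, event: str) -> str:
        """Move to the next status, or fail if the event is not allowed."""
        key = (self.status, event)
        if key not in TRANSITIONS:
            raise LifecycleError(f"cannot apply {event!r} while {self.status!r}")
        self.status = TRANSITIONS[key]
        return self.status


demo = Container("demo")
for event in ["created", "start", "exit", "delete"]:
    print(event, "->", demo.apply(event))
```

Whatever runtime is used, a tool that drives it can rely on the same sequence of states.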

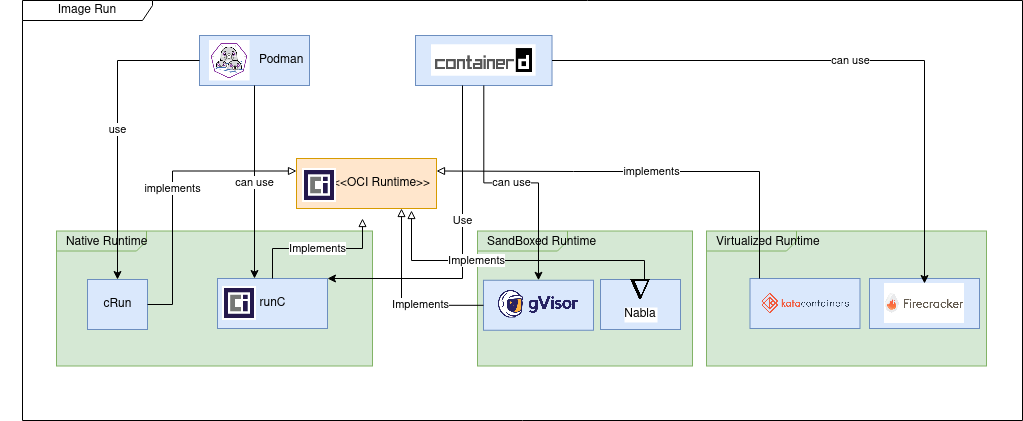

From the implementation point of view we can distinguish three types of runtimes.

Native runtimes use the host kernel to run containers. The isolation of containers that share the same kernel can be improved with “out of the box” security mechanisms such as seccomp, AppArmor, or SELinux.
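To give an idea of what such a mechanism looks like in practice, here is a sketch of a seccomp allow-list as it would appear in the linux.seccomp section of a runtime configuration. The action and architecture constants are the ones used by the OCI runtime specification; the helper name and the list of syscalls are purely illustrative.

```python
import json


def allow_list_profile(syscall_names: list[str]) -> dict:
    """Return a seccomp section that denies everything except the given syscalls."""
    return {
        "defaultAction": "SCMP_ACT_ERRNO",  # any syscall not listed fails with an error
        "architectures": ["SCMP_ARCH_X86_64"],
        "syscalls": [
            {"names": sorted(syscall_names), "action": "SCMP_ACT_ALLOW"},
        ],
    }


# Illustrative allow-list only; a real workload needs far more syscalls than this.
profile = allow_list_profile(["read", "write", "exit_group", "futex"])
print(json.dumps({"linux": {"seccomp": profile}}, indent=2))
```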

The most widely used native container runtime projects today are listed below (a few others, such as railcar and rkt, existed in the past but are now deprecated):

- runc – the de facto standard container runtime, originally created by Docker and now maintained under the OCI umbrella, released under the Apache 2 license

- crun – a runtime written in C, created and maintained by Red Hat; it is part of the Podman/Buildah family of tools.

Sandboxed runtimes do not share the host kernel directly. Instead, the containerized process runs on a kernel proxy layer, which then interacts with the host kernel on the container’s behalf. Because of this increased isolation, these runtimes have a reduced attack surface and make it less likely that a containerized process can “escape” from the original container.

- gVisor

  - gVisor provides a virtualized environment in order to sandbox containers. The system interfaces normally implemented by the host kernel are moved into a distinct, per-sandbox application kernel in order to minimize the risk of a container escape exploit.

  - To do this, a component of gVisor called the Sentry intercepts syscalls from the application. The Sentry is heavily sandboxed using seccomp, such that it is unable to access filesystem resources itself. When it needs to make system calls related to file access, it offloads them to an entirely separate process called the Gofer. Even those system calls that are unrelated to filesystem access are not passed through to the host kernel directly but are instead reimplemented within the Sentry. Essentially it’s a guest kernel, operating in user space.

- Nabla

  - A containerized application can avoid making a Linux system call if it links to a library OS component that implements the system call functionality. Nabla containers use library OS (aka unikernel) techniques, specifically those from the Solo5 project, to avoid system calls and thereby reduce the attack surface. Nabla containers only use 7 system calls; all others are blocked via a Linux seccomp policy.

Virtualized runtimes take a different approach to container isolation: they create a lightweight virtual machine in which a dedicated guest kernel and the application run.

- Kata Containers

  - The idea with Kata Containers is to run containers within a separate virtual machine. This approach gives the ability to run applications from regular OCI format container images, with all the isolation of a virtual machine.

  - Kata uses a proxy between the container runtime and a separate target host where the application code runs. The runtime proxy creates a separate virtual machine using QEMU to run the container on its behalf.

- Firecracker

  - Firecracker is a virtual machine monitor that runs lightweight virtual machines, offering the benefits of secure isolation through a hypervisor and no shared kernel, but with startup times around 100 ms.

  - Firecracker’s designers have stripped out functionality that is generally included in a kernel but that isn’t required in a container, such as enumerating devices. The main saving comes from a minimal device model that strips out all but the essential devices.

OCI Distribution Specification

The Open Container Initiative Distribution Specification (a.k.a. “OCI Distribution Spec”) defines an API protocol to facilitate and standardize the distribution of content. The specification is designed to be agnostic of content types, OCI Image types being currently the most prominent.

The specification is closely based on the Docker Registry HTTP API V2.
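To give a feel for the protocol, the sketch below builds the URLs of three endpoints defined by the specification: fetching a manifest, fetching a blob, and starting a blob upload. The registry host and repository name are hypothetical, and the code only constructs URLs; it does not contact any registry.

```python
def manifest_url(registry: str, name: str, reference: str) -> str:
    """URL used to GET (or PUT) a manifest by tag or digest."""
    return f"{registry}/v2/{name}/manifests/{reference}"


def blob_url(registry: str, name: str, digest: str) -> str:
    """URL used to GET a blob (a layer or a config) by digest."""
    return f"{registry}/v2/{name}/blobs/{digest}"


def upload_url(registry: str, name: str) -> str:
    """URL used to POST the start of a blob upload session."""
    return f"{registry}/v2/{name}/blobs/uploads/"


# Hypothetical registry and repository, used only to show the URL shapes.
REGISTRY = "https://registry.example.com"
REPOSITORY = "library/nginx"

print(manifest_url(REGISTRY, REPOSITORY, "latest"))
print(blob_url(REGISTRY, REPOSITORY, "sha256:<digest>"))
print(upload_url(REGISTRY, REPOSITORY))
```

A client typically requests the manifest first, then downloads each blob that the manifest references by digest.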

The GitHub repository notes four potential use cases:

- Artifact Verification: Help enable trust between registries.

- Resumable Push: Improve communication to help reduce re-uploading for transfers interrupted midway.

- Resumable Pull: Similarly, it could help reduce unneeded downloads on the incoming side.

- Layer Upload Deduplication: The spec could help container registries avoid duplicating layers.

On the tooling side, any tool or vendor that supports the Docker Registry API will most probably also support the new specification.

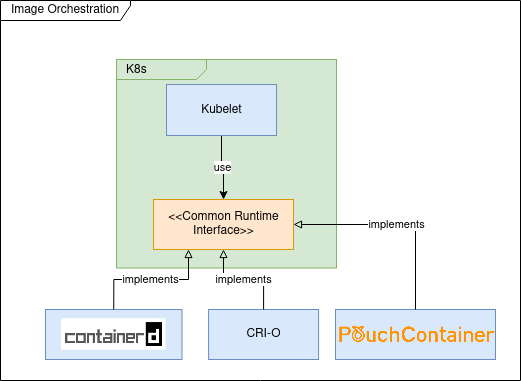

CNCF Container Runtime Interface (CRI)

In the first versions of Kubernetes (prior to 1.5), Docker was the default container runtime engine, and its usage was hardcoded into the Kubelet (the Kubernetes component that runs on each cluster node and ensures that the containers described by Pod specifications are running and healthy).

The goal of CRI was to define an API that any container runtime should implement in order to be used by Kubelet.
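The real CRI is a gRPC API split into a runtime service and an image service. The following sketch models a small subset of it in plain Python to show the idea: the caller depends only on the interface, so any runtime that implements it can be plugged in. The method names mirror real CRI calls (RunPodSandbox, PullImage, CreateContainer, StartContainer), but the signatures are heavily simplified, and InMemoryRuntime and start_pod are hypothetical names.

```python
import itertools
from typing import Protocol


class ImageService(Protocol):
    def pull_image(self, image: str) -> str: ...


class RuntimeService(Protocol):
    def run_pod_sandbox(self, pod_name: str) -> str: ...
    def create_container(self, sandbox_id: str, image_ref: str) -> str: ...
    def start_container(self, container_id: str) -> None: ...


class InMemoryRuntime:
    """Toy runtime that records calls instead of running anything."""

    def __init__(self) -> None:
        self._ids = itertools.count(1)
        self.calls: list[str] = []

    def pull_image(self, image: str) -> str:
        self.calls.append(f"PullImage({image})")
        return image

    def run_pod_sandbox(self, pod_name: str) -> str:
        self.calls.append(f"RunPodSandbox({pod_name})")
        return f"sandbox-{next(self._ids)}"

    def create_container(self, sandbox_id: str, image_ref: str) -> str:
        self.calls.append(f"CreateContainer({sandbox_id}, {image_ref})")
        return f"container-{next(self._ids)}"

    def start_container(self, container_id: str) -> None:
        self.calls.append(f"StartContainer({container_id})")


def start_pod(runtime: RuntimeService, images: ImageService,
              pod_name: str, image: str) -> str:
    """Roughly what a Kubelet-like caller does to start a one-container pod."""
    sandbox_id = runtime.run_pod_sandbox(pod_name)
    image_ref = images.pull_image(image)
    container_id = runtime.create_container(sandbox_id, image_ref)
    runtime.start_container(container_id)
    return container_id


backend = InMemoryRuntime()
print(start_pod(backend, backend, "web", "nginx:latest"))
print("\n".join(backend.calls))
```

Swapping InMemoryRuntime for another class with the same methods requires no change in start_pod, which is the decoupling CRI brought to the Kubelet.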

The most important tools implementing CRI are:

- containerd is a high-level container runtime originally developed by Docker and now a CNCF project. It is able to push and pull images, manage storage, and define network capabilities. It also manages the lifecycle of running containers by passing the corresponding commands to a low-level container runtime like runc.

- CRI-O is the Red Hat implementation of CRI and has been the default container runtime for OpenShift since version 4. CRI-O has fewer features than containerd and delegates image management and storage to components from the “Container Tools” project. As a low-level container runtime it can use any OCI-compatible runtime, such as runc or crun.

The list above is most probably not exhaustive, and other projects implement the CRI specification as well; one example is PouchContainer, a container engine open sourced by Alibaba. If you are interested in how a CRI implementation is done, you could read this: Design and Implementation of PouchContainer CRI.
